Natural language processing has advanced remarkably in recent years thanks to language models such as BERT and GPT. These models have enabled sophisticated programs that can interact with human language, changing the way we communicate with technology. With the introduction of GPT, a rivalry emerged between the language models that power AI-generated content.

This article explores the significant differences between two of the most popular language models, GPT and BERT. We will also look at UPDF, a PDF tool with built-in AI, to see how capable and effective it is when used alongside these models.

Windows • macOS • iOS • Android 100% secure

Part 1. Overview of GPT and Its Use Cases

The full form of GPT is Generative Pre-trained Transformer, an AI language model that uses deep learning to generate human-like text. GPT models are trained on large amounts of text data to learn the patterns and relationships in language, and then generate new text based on those learned patterns.

GPT-3 is the most prominent version of the GPT series, launched in June 2020 by OpenAI. It is an autoregressive language model with 175 billion parameters, which made it one of the largest language models ever built at the time of its release.
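To make the term "autoregressive" concrete, here is a minimal Python sketch. It is a toy model that only counts which word follows which, not how GPT-3 itself is implemented; the corpus and the function names `train_bigrams` and `generate` are hypothetical and chosen purely for illustration. What it shares with GPT is the generation loop: each new word is predicted from the words produced so far and then appended to the sequence.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def generate(follows, start, max_words=5):
    """Greedy autoregressive generation.

    Each step predicts the next word from what has been generated so far
    (this toy model only looks at the last word) and appends it.
    """
    output = [start]
    for _ in range(max_words):
        candidates = follows.get(output[-1])
        if not candidates:
            break  # no known continuation for this word
        output.append(candidates.most_common(1)[0][0])
    return " ".join(output)


corpus = "the cat sat on the mat and the cat sat on the rug"
model = train_bigrams(corpus)
print(generate(model, "the", max_words=3))  # prints: the cat sat on
```

A real GPT model replaces the lookup table with a Transformer network and conditions on the whole preceding sequence, but the word-by-word loop is the same idea.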

Use Cases of GPT

- Language Translation: GPT-3 can translate text from one language to another, often with high accuracy.

- Content Creation: It can generate content such as articles, blog posts, and product descriptions, and it can also summarize long documents.

- Question Answering: It can answer questions posed by users, both general knowledge questions and questions from a specific domain.

- Text Completion: It can act as a text completion tool, for example by auto-completing emails, social media posts, and text messages.

Part 2. Overview of BERT and Its Use Cases

BERT (Bidirectional Encoder Representations from Transformers) is a popular language model developed by Google AI. When making predictions, a bidirectional transformer model considers a word's left and right context. BERT is designed to help with multiple natural language understanding (NLU) tasks, such as question answering, sentiment analysis, and named entity recognition.

It uses a pre-training approach that involves unsupervised learning to train a model on large amounts of text data. This pre-training approach enables BERT to understand the context of a word better, and it is one of the reasons why BERT has become so popular in the NLP community.
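One of BERT's pre-training objectives is masked language modeling: some tokens are hidden and the model learns to predict them from the words on both sides, so the text supplies its own labels. The sketch below shows how such a training example could be built. It is a simplification under stated assumptions: the function name `mask_tokens` is hypothetical, tokens are plain whitespace-split words, and every selected token is replaced with `[MASK]` (the original BERT recipe also sometimes substitutes a random token or leaves the token unchanged).

```python
import random

MASK = "[MASK]"


def mask_tokens(tokens, mask_prob=0.15, seed=0):
    """Build one masked-language-model training example.

    Returns (masked_tokens, labels). labels[i] holds the original token
    where position i was masked, and None everywhere else.
    """
    rng = random.Random(seed)  # fixed seed so the example is repeatable
    masked, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            masked.append(MASK)
            labels.append(tok)   # the model must recover this token
        else:
            masked.append(tok)
            labels.append(None)  # nothing to predict at this position
    return masked, labels


sentence = "the bank raised interest rates again this year".split()
masked, labels = mask_tokens(sentence, mask_prob=0.3)
print(masked)
print(labels)
```

Because the labels come from the text itself, no human annotation is needed at this stage.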

Use Cases of BERT

- Google Search Engine: BERT has been integrated into Google's search engine to help better understand user queries and deliver more relevant search results.

- Hugging Face Transformers Library: BERT is one of the models included in the Hugging Face Transformers library, a popular open-source library for natural language processing.

- Microsoft Azure Cognitive Services: Also used in Microsoft's Azure Cognitive Services, which provides a suite of AI-powered tools for developers.

- Google Natural Language API: This API uses BERT to power its sentiment analysis and entity recognition features.


Part 3. Similarities Between GPT-3 and BERT

GPT-3 and BERT are two of the most powerful natural language processing models in the field of machine learning. Each has unique features and characteristics that make it stand out. Despite their differences, however, the two models share some similarities in design and capabilities, which are outlined below:

Architecture

GPT and BERT use the Transformer architecture, a neural network architecture designed to learn contextual relations between words in a text using attention mechanisms. The attention mechanism allows the model to focus on specific parts of the text that are more relevant to the context of a given task.
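The attention mechanism described above can be sketched in a few lines of plain Python. This is a minimal illustration of scaled dot-product attention, the core operation of the Transformer, and not the code of either model: the function names `softmax` and `attention` and the tiny example vectors are assumptions made for the example, and real models add learned projections, multiple attention heads, and optimized tensor libraries.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors.

    For each query, score every key, convert the scores to weights,
    and return the weighted average of the values.
    """
    dim = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
            for k in keys
        ]
        weights = softmax(scores)
        mixed = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# One query that points in the same direction as the first key.
outputs, weights = attention(
    queries=[[2.0, 0.0]],
    keys=[[1.0, 0.0], [0.0, 1.0]],
    values=[[10.0, 0.0], [0.0, 10.0]],
)
print(weights[0])  # the first key receives the larger weight
print(outputs[0])
```

The weights show which parts of the input the model "focuses on" for a given position: keys that match the query more closely contribute more to the output.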

Learning Method

Another similarity between GPT and BERT is that both are pre-trained without labeled data, an approach often described as unsupervised or self-supervised learning. They are trained on massive amounts of unstructured text, which lets them learn the patterns and structures of natural language. Labeled data is typically needed only later, when a model is fine-tuned for a specific task.

NLP Tasks

GPT and BERT can perform various NLP tasks, such as question answering, summarization, or translation, among others. While both models have shown impressive results in multiple tasks, the degree of accuracy may vary depending on the specific task and the size of the training dataset.

Part 4. Main Differences Between GPT-3 and BERT

GPT and BERT are two of the most popular natural language processing models and are used for a wide variety of tasks. While they share certain similarities, such as being based on the Transformer architecture, significant differences set them apart. The table below summarizes the main ones:

| Features | GPT-3 | BERT |
|---|---|---|
| Architecture | Autoregressive model that generates text by predicting the next word in a sequence from the preceding words. | Bidirectional model that considers both left and right context when making predictions. |
| Training Data | Drawn from about 45TB of raw text (filtered before training) from sources such as web pages, books, and Wikipedia. | Trained on about 3.3 billion words from English Wikipedia and BooksCorpus. |
| Parameter Size | 175 billion parameters, far larger than earlier language models at its release. | 340 million parameters (BERT-large), much smaller than GPT-3. |
| Fine-Tuning | Can be adapted to a wide range of tasks with few-shot prompting, using only a handful of examples. | Usually needs task-specific fine-tuning on labeled data to achieve good performance. |
| Inference Speed | Slower inference because of its much larger size. | Faster inference than GPT-3, which makes it better suited for real-time applications. |
| Task Performance | Showed remarkable performance on a wide range of natural language processing tasks. | Achieved state-of-the-art performance on a range of tasks, including text classification. |
| Domain Adaptation | Can generalize to new domains and tasks from a few examples. | Requires more fine-tuning and retraining to adapt to new domains and tasks. |
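The architectural difference in the first row of the table comes down to which positions each token is allowed to look at. The short Python sketch below builds the two kinds of attention mask; the function name `attention_mask` is hypothetical, and the sketch only illustrates the visibility pattern, not either model's actual implementation.

```python
def attention_mask(num_tokens, causal):
    """Return a matrix where mask[i][j] is 1 if token i may attend to token j."""
    return [
        [1 if (not causal or j <= i) else 0 for j in range(num_tokens)]
        for i in range(num_tokens)
    ]


gpt_style = attention_mask(4, causal=True)    # each token sees itself and earlier tokens
bert_style = attention_mask(4, causal=False)  # each token sees the whole sequence

print("GPT-style (autoregressive):")
for row in gpt_style:
    print(row)

print("BERT-style (bidirectional):")
for row in bert_style:
    print(row)
```

The lower-triangular mask is what lets a GPT-style model generate text one word at a time, while the full mask is what lets a BERT-style model use context from both directions when interpreting a word.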

These differences highlight that while GPT-3 and BERT are both powerful NLP models, each has unique characteristics that make it better suited for specific tasks. Considering these differences is essential when choosing which model to use for a specific application.

Part 5. Which One Will Conquer the Future AI Market, GPT or BERT?

When it comes to GPT-3 and BERT, both have their unique advantages and limitations. However, it appears that BERT is better positioned to dominate the future NLP market. One of the reasons for this is BERT's bidirectional architecture, which considers both the left and right context and so helps it better understand the full meaning of a sentence or phrase.

This makes it particularly well-suited for tasks such as sentiment analysis, text classification, named entity recognition, NLU, and question-answering. Furthermore, BERT's relatively smaller size and faster inference speed make it a more practical choice for real-time applications that require quick and efficient processing. In fact, BERT has served as a foundation for the development of many derivative models.

In addition, the release of DistilBERT, a smaller version of BERT, further illustrates its versatility and impact. Nevertheless, the competition between GPT and BERT is still ongoing, and both models will likely continue to evolve and improve.
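The size gap behind the practicality argument can be made concrete with rough arithmetic. The sketch below uses the parameter counts quoted in this article and assumes 2 bytes per parameter (16-bit weights); it counts the weights only and ignores activations and other runtime overhead, so treat the results as a lower bound. The function name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(num_parameters, bytes_per_parameter=2):
    """Memory needed for the weights alone, in gigabytes (1 GB = 10**9 bytes).

    Assumes 16-bit weights by default; this is an illustrative assumption.
    """
    return num_parameters * bytes_per_parameter / 1e9


gpt3 = weight_memory_gb(175_000_000_000)  # 175 billion parameters
bert_large = weight_memory_gb(340_000_000)  # 340 million parameters

print(f"GPT-3: {gpt3:.0f} GB")
print(f"BERT-large: {bert_large:.2f} GB")
print(f"Ratio: {gpt3 / bert_large:.0f}x")
```

Under these assumptions GPT-3's weights take about 350 GB against roughly 0.68 GB for BERT-large, a difference of around 515 times, which is why BERT-sized models are easier to serve in real-time applications.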

Part 6. Will GPT or BERT be Used in PDF Software?

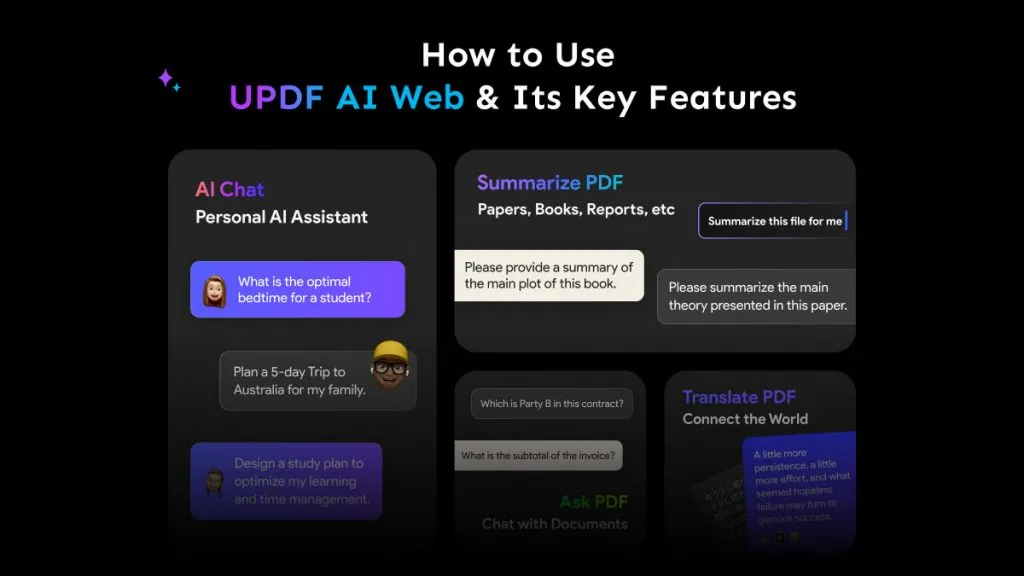

GPT or BERT may well be used in PDF software, but this ultimately depends on the specific goals and needs of the software developers. Some PDF programs already integrate ChatGPT and offer features such as summarizing, proofreading, and rewriting PDFs. One example of a PDF editor that leverages ChatGPT is UPDF.


You can add the results obtained from UPDF AI directly into your PDF without damaging the document's original formatting. You can also use UPDF AI to check the content and grammar of eBook drafts.

UPDF AI works much like ChatGPT, which means you can also chat with it. It can answer most questions within seconds.

UPDF AI has two modes for explaining PDF content: you can select part of the file or the entire file. This is convenient when your requirements vary. Summarizing a thousand-page file in a few seconds is not a problem for UPDF AI.

Key Features of UPDF That Stand Out

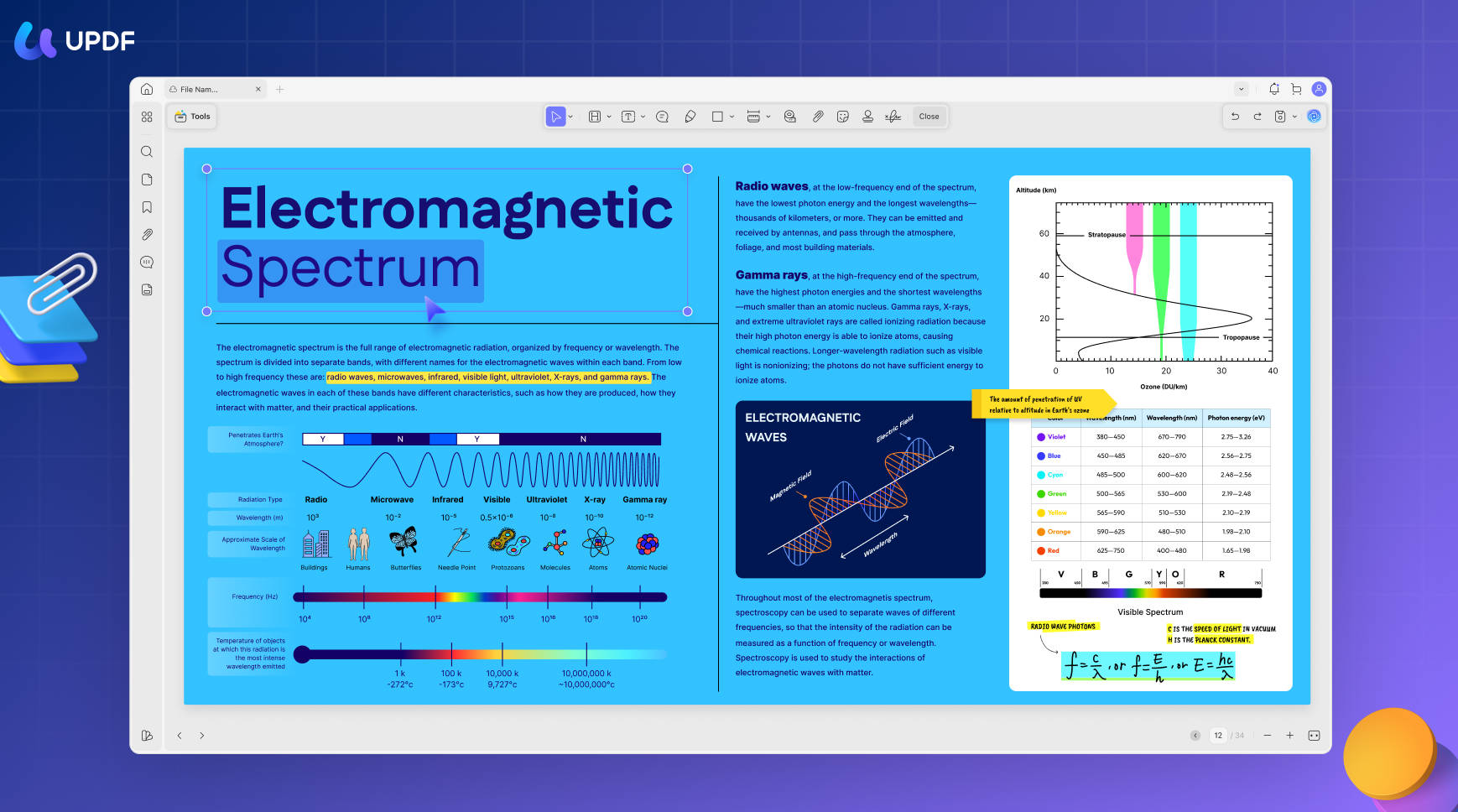

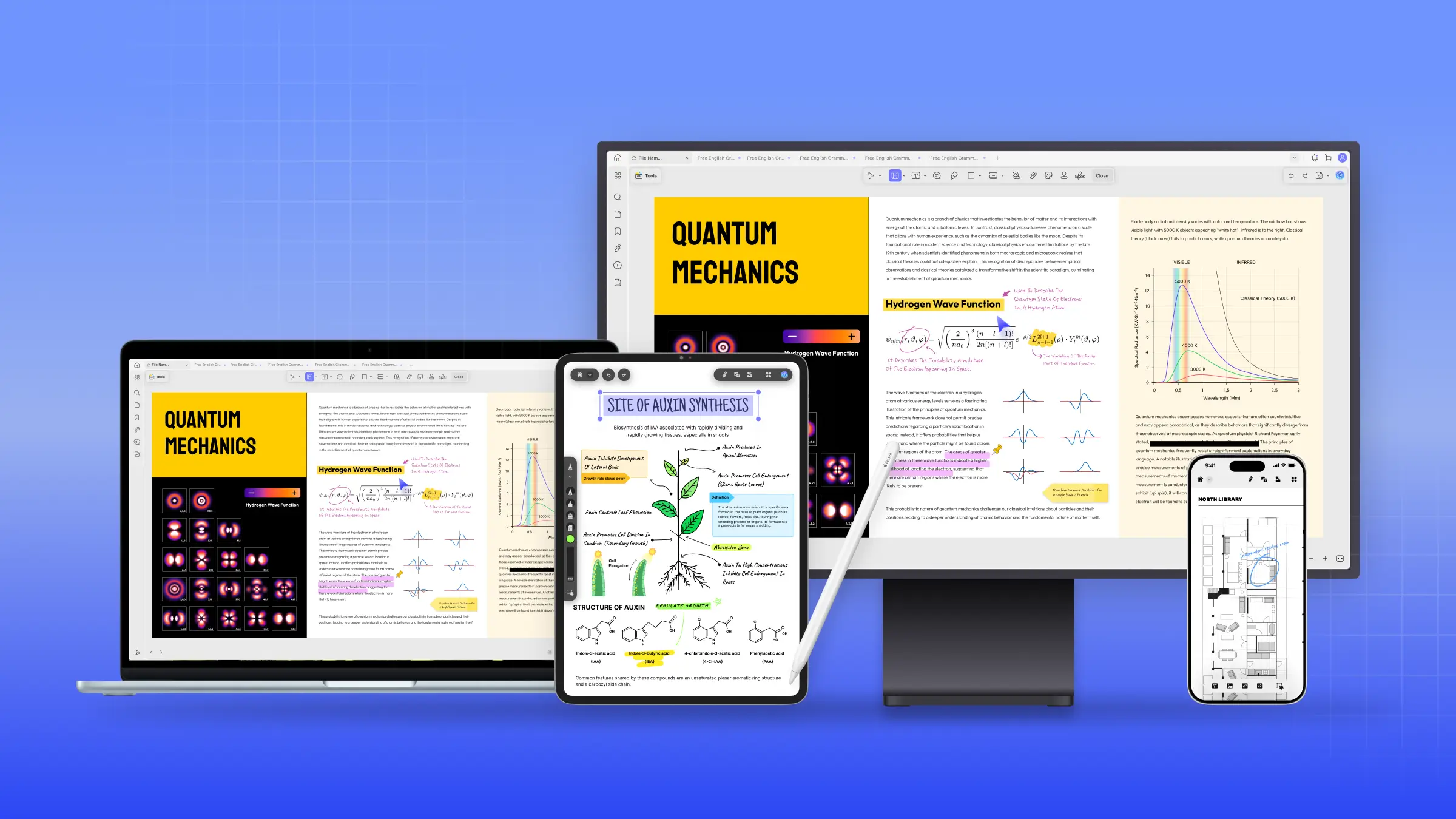

UPDF stands out as a PDF editor that can complement AI writing by helping you compile documents more effectively. Its key features include:

- Editing: Allows users to edit PDF documents easily and efficiently. Users can add, delete, and modify text, images, and other elements in the PDF document.

- Annotating: Enables users to annotate PDF documents with comments, highlights, and other types of markup. This feature is particularly useful for collaborative work and document review.

- OCR: UPDF's OCR function can recognize text in 38 languages, making it a valuable tool for multilingual users. In the future, this feature may also use NLP models such as GPT-3 or BERT to improve accuracy.

- Cloud and Sharing Service: UPDF Cloud lets users securely store their PDF documents in the cloud and access them from other devices. Users can reach their documents from anywhere and collaborate with others by sharing PDFs as links.

Wrapping Up

In conclusion, BERT and GPT are powerful NLP tools that excel at different tasks because of their architecture and training dataset size. GPT is ideal for tasks such as summarization or translation, while BERT is more advantageous for sentiment analysis or NLU. When deciding which model to use, it's essential to consider your specific needs and the task at hand.

However, if you are looking for a reliable and efficient tool to help you work with PDF documents, we highly recommend UPDF. With this tool, you can easily translate, summarize, explain, and rewrite PDFs without any hassle to streamline the document workflow.


